What is intercoder reliability?

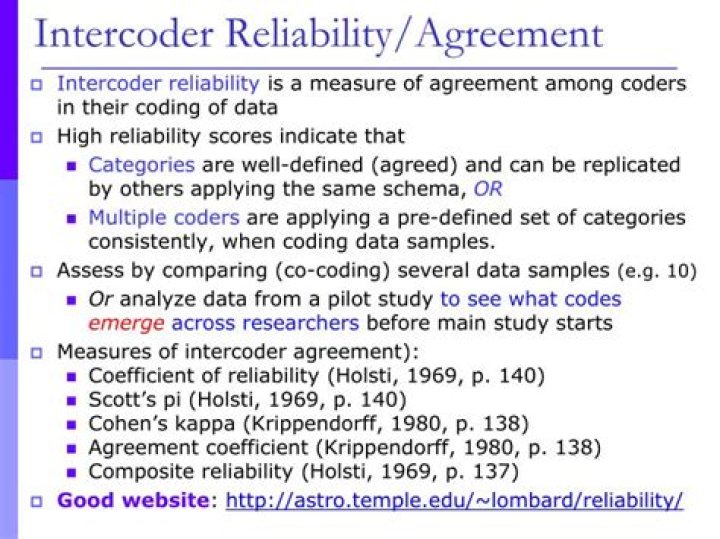

Intercoder reliability is the widely used term for the extent to which independent coders evaluate a characteristic of a message or artifact and reach the same conclusion. (It is also known as intercoder agreement, according to Tinsley and Weiss (2000).)

How is intercoder reliability measured?

Intercoder reliability = $2M / (N_1 + N_2)$. In this formula, $M$ is the total number of decisions that the two coders agree on; $N_1$ and $N_2$ are the numbers of decisions made by Coder 1 and Coder 2, respectively. Using this method, intercoder reliability ranges from 0 (no agreement) to 1 (perfect agreement).
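As a rough illustration, the formula above can be sketched in Python. The function name `intercoder_reliability`, the dictionary layout (unit ID mapped to assigned category), and the sample data are assumptions made for this example, not part of the original text.

```python
def intercoder_reliability(codes_1, codes_2):
    """Return 2 * M / (N1 + N2) for two coders' decisions.

    codes_1 and codes_2 are dicts mapping a unit ID to the category
    that coder assigned (a hypothetical layout for this sketch).
    """
    n1 = len(codes_1)  # N1: decisions made by Coder 1
    n2 = len(codes_2)  # N2: decisions made by Coder 2
    if n1 + n2 == 0:
        raise ValueError("at least one coding decision is required")
    # M: units that both coders coded and assigned to the same category
    m = sum(
        1
        for unit, category in codes_1.items()
        if unit in codes_2 and codes_2[unit] == category
    )
    return 2 * m / (n1 + n2)


# Made-up example data: Coder 2 coded one more unit than Coder 1.
coder_1 = {"u1": "positive", "u2": "negative", "u3": "neutral", "u4": "positive"}
coder_2 = {"u1": "positive", "u2": "negative", "u3": "positive",
           "u4": "positive", "u5": "neutral"}

# The coders agree on u1, u2 and u4, so M = 3, N1 = 4, N2 = 5.
print(round(intercoder_reliability(coder_1, coder_2), 2))  # 2 * 3 / (4 + 5), about 0.67
```

Keying the decisions by unit ID lets the two coders make different numbers of decisions, which is the case the $N_1 + N_2$ denominator is meant to handle.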

What is intercoder reliability in qualitative research?

Intercoder reliability is the extent to which two different researchers agree on how to code the same content. It is often used in content analysis when consistency and validity are among the goals of the research.

What is a good interrater reliability score?

A number of statistics have been used to measure interrater and intrarater reliability … Table 3.

| Value of Kappa | Level of Agreement | % of Data that are Reliable |
|---|---|---|
| .60–.79 | Moderate | 35–63% |
| .80–.90 | Strong | 64–81% |
| Above .90 | Almost Perfect | 82–100% |
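
The rows of the table above can be turned into a small lookup, sketched here in Python. The function name `agreement_level` is hypothetical, and two choices are assumptions of this sketch: values between .79 and .80 are treated as "Moderate", and values below .60 return `None` because the rows shown here do not cover them.

```python
def agreement_level(kappa):
    """Map a kappa value to the level-of-agreement label in the table above.

    Returns None for values below .60, which the rows shown do not cover.
    """
    if kappa > 0.90:
        return "Almost Perfect"
    if kappa >= 0.80:
        return "Strong"
    if kappa >= 0.60:
        return "Moderate"
    return None


for value in (0.95, 0.85, 0.65, 0.40):
    print(value, agreement_level(value))
```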

What is the minimum acceptable value of intercoder reliability statistics?

McHugh says that many texts recommend 80% agreement as the minimum acceptable interrater agreement. I also recommend calculating the confidence interval for kappa, because the kappa score alone is sometimes not enough to assess the degree of agreement in the data.
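
One way to get a confidence interval for kappa is the common large-sample approximation, in which the standard error is $\sqrt{p_o(1 - p_o) / (n(1 - p_e)^2)}$. The sketch below assumes that approximation; the function name `kappa_confidence_interval`, the parameter names (`p_o` for observed agreement, `p_e` for chance-expected agreement, `n` for the number of rated items), and the example numbers are all chosen for illustration.

```python
import math


def kappa_confidence_interval(p_o, p_e, n, z=1.96):
    """Return (kappa, lower, upper) using a large-sample approximation.

    p_o: observed proportion of agreement
    p_e: proportion of agreement expected by chance
    n:   number of items rated by both raters
    z:   normal quantile (1.96 gives an approximate 95% interval)
    """
    if n <= 0:
        raise ValueError("n must be positive")
    if p_e >= 1:
        raise ValueError("kappa is undefined when expected agreement is 1")
    kappa = (p_o - p_e) / (1 - p_e)
    # Approximate standard error of kappa
    se = math.sqrt(p_o * (1 - p_o) / (n * (1 - p_e) ** 2))
    return kappa, kappa - z * se, kappa + z * se


# Made-up inputs: 85% observed agreement, 50% expected by chance, 100 items.
kappa, lower, upper = kappa_confidence_interval(p_o=0.85, p_e=0.50, n=100)
print(f"kappa = {kappa:.2f}, 95% CI = ({lower:.2f}, {upper:.2f})")
```

With these inputs kappa is 0.70 but the interval runs from roughly 0.56 to 0.84, which shows how much uncertainty a single kappa score can hide when the sample is small.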

How do you establish intercoder reliability?

Two tests are frequently used to establish interrater reliability: percentage of agreement and the kappa statistic. To calculate the percentage of agreement, add the number of times the abstractors agree on the same data item, then divide that sum by the total number of data items.
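
Both tests can be sketched in a few lines of Python. The function names, the list-based input (one rating per data item, in the same order for both raters), and the sample ratings are assumptions for this example; the kappa shown is Cohen's kappa for two raters.

```python
from collections import Counter


def percent_agreement(ratings_1, ratings_2):
    """Share of data items on which the two raters gave the same rating."""
    if len(ratings_1) != len(ratings_2) or not ratings_1:
        raise ValueError("both raters must rate the same non-empty set of items")
    agreements = sum(a == b for a, b in zip(ratings_1, ratings_2))
    return agreements / len(ratings_1)


def cohens_kappa(ratings_1, ratings_2):
    """Cohen's kappa: agreement corrected for agreement expected by chance."""
    n = len(ratings_1)
    p_o = percent_agreement(ratings_1, ratings_2)
    counts_1 = Counter(ratings_1)
    counts_2 = Counter(ratings_2)
    # Chance agreement: product of the raters' marginal proportions per category
    p_e = sum(counts_1[c] * counts_2[c] for c in counts_1) / (n * n)
    if p_e == 1:
        raise ValueError("kappa is undefined when expected agreement is 1")
    return (p_o - p_e) / (1 - p_e)


# Made-up ratings for ten data items.
rater_1 = ["yes", "yes", "no", "no", "yes", "no", "yes", "yes", "no", "yes"]
rater_2 = ["yes", "no", "no", "no", "yes", "no", "yes", "yes", "yes", "yes"]

print(f"percent agreement = {percent_agreement(rater_1, rater_2):.2f}")
print(f"kappa = {cohens_kappa(rater_1, rater_2):.2f}")
```

In this sample the raters agree on 8 of 10 items (80%), yet kappa is only about 0.58, because roughly half of that agreement would be expected by chance.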

What is interjudge reliability?

In psychology, interjudge reliability is the consistency of measurement obtained when different judges or examiners independently administer the same test to the same individual.

Why is interjudge reliability important?

The importance of rater reliability lies in the fact that it represents the extent to which the data collected in the study are correct representations of the variables measured. Measurement of the extent to which data collectors (raters) assign the same score to the same variable is called interrater reliability.