What is the curse of dimensionality? Explain with an example.

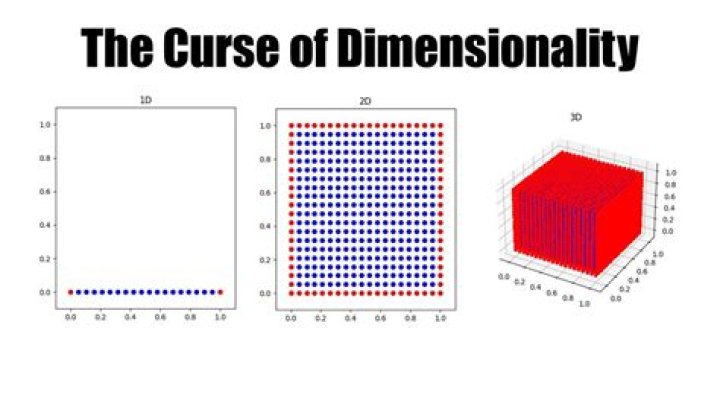

The curse of dimensionality refers to various phenomena that arise when analyzing and organizing data in high-dimensional spaces and that do not occur in low-dimensional settings such as the three-dimensional physical space of everyday experience. The expression was coined by Richard E. Bellman.

What is meant by the curse of dimensionality?

Definition. The curse of dimensionality, first introduced by Bellman [1], indicates that the number of samples needed to estimate an arbitrary function with a given level of accuracy grows exponentially with respect to the number of input variables (i.e., dimensionality) of the function.
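A rough way to see that exponential growth is to count the points on a regular grid. The sketch below assumes, purely for illustration, that 10 sample points per input variable are enough for the desired accuracy; `grid_samples` is a hypothetical helper, not part of any library.

```python
def grid_samples(points_per_axis: int, n_dims: int) -> int:
    """Number of grid points needed to sample every axis at the same resolution."""
    return points_per_axis ** n_dims


# The required sample count is multiplied by 10 for every added input variable.
for n_dims in (1, 2, 3, 10):
    print(n_dims, grid_samples(10, n_dims))
```

With one variable, 10 samples cover the range; with 10 variables the same resolution already needs ten billion.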

What causes the curse of dimensionality?

The term "curse of dimensionality" was coined by the mathematician Richard Bellman. According to him, the curse of dimensionality is the problem caused by the exponential increase in volume associated with adding extra dimensions to Euclidean space.

What is the curse of dimensionality? Why is dimensionality reduction even necessary?

Dimensionality reduction reduces the time and storage space required. It helps remove multicollinearity, which makes the parameters of the machine learning model easier to interpret. The data also becomes easier to visualize when reduced to very low dimensions such as 2D or 3D.

Does the curse of dimensionality cause overfitting?

KNN is very susceptible to overfitting due to the curse of dimensionality. The curse of dimensionality also describes the phenomenon where, for a fixed-size training dataset, the feature space becomes increasingly sparse as the number of dimensions grows.
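The sparsity effect can be checked numerically. The following is a minimal sketch, assuming points drawn uniformly from the unit hypercube and a fixed sample of 200 points; the helper name `mean_nearest_neighbor_distance` and all parameter values are illustrative assumptions.

```python
import math
import random


def mean_nearest_neighbor_distance(n_points: int, n_dims: int, seed: int = 0) -> float:
    """Average Euclidean distance from each point to its closest other point."""
    rng = random.Random(seed)
    # Fixed-size sample drawn uniformly from the unit hypercube (assumed setup).
    points = [[rng.random() for _ in range(n_dims)] for _ in range(n_points)]
    total = 0.0
    for i, p in enumerate(points):
        total += min(math.dist(p, q) for j, q in enumerate(points) if j != i)
    return total / n_points


# Same number of points, more dimensions: neighbors drift further apart.
for n_dims in (1, 2, 10, 50):
    print(n_dims, round(mean_nearest_neighbor_distance(200, n_dims), 3))
```

The sample size never changes, yet the nearest neighbor of each point moves further away as dimensions are added, which is what "increasingly sparse" means in practice.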

How can the curse of dimensionality be reduced in data mining?

You can reduce dimensionality by limiting the number of principal components you keep, based on cumulative explained variance. The PCA transformation also depends on scale, so you should normalize your dataset first. PCA finds linear correlations between the given features.
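A minimal sketch of that recipe, written with plain NumPy rather than a dedicated PCA library (a choice made here for illustration). The function name `n_components_for_variance`, the 95% threshold, and the synthetic data are all assumptions.

```python
import numpy as np


def n_components_for_variance(X: np.ndarray, threshold: float = 0.95) -> int:
    """Smallest number of principal components whose cumulative
    explained variance ratio reaches `threshold`."""
    # PCA depends on scale, so standardize each feature first.
    X_std = (X - X.mean(axis=0)) / X.std(axis=0)
    # Squared singular values of the standardized data are proportional
    # to the variance captured by each principal component.
    singular_values = np.linalg.svd(X_std, compute_uv=False)
    explained_ratio = singular_values**2 / np.sum(singular_values**2)
    cumulative = np.cumsum(explained_ratio)
    k = int(np.searchsorted(cumulative, threshold)) + 1
    return min(k, len(cumulative))


# Synthetic data (assumed): 5 features built from only 2 latent factors.
rng = np.random.default_rng(0)
latent = rng.normal(size=(500, 2))
mixing = np.array([[1.0, 0.0, 1.0, 1.0, 2.0],
                   [0.0, 1.0, 1.0, -1.0, 1.0]])
X = latent @ mixing + 0.05 * rng.normal(size=(500, 5))

print(n_components_for_variance(X, threshold=0.95))
```

Because the five features here are linear mixtures of two underlying factors, a small number of components is enough to pass the threshold, and the remaining ones can be dropped.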

What is the purpose of dimensionality reduction?

Dimensionality reduction refers to techniques for reducing the number of input variables in training data. When dealing with high dimensional data, it is often useful to reduce the dimensionality by projecting the data to a lower dimensional subspace which captures the “essence” of the data.

How does the curse of dimensionality relate to overfitting?

KNN is very susceptible to overfitting due to the curse of dimensionality. The curse of dimensionality also describes the phenomenon where, for a fixed-size training dataset, the feature space becomes increasingly sparse as the number of dimensions grows. This notion is closely related to the problem of overfitting.

Why is high dimensionality bad?

When we have too many features, observations become harder to cluster. Believe it or not, too many dimensions cause every observation in your dataset to appear equidistant from all the others.
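The "everything looks equidistant" effect can be measured as the relative gap between the farthest and the nearest point from a query. This is a sketch under assumed conditions (uniform random data in the unit hypercube, 500 points); `relative_contrast` is a hypothetical helper name.

```python
import math
import random


def relative_contrast(n_points: int, n_dims: int, seed: int = 0) -> float:
    """(farthest - nearest) / nearest distance from one random query point."""
    rng = random.Random(seed)
    query = [rng.random() for _ in range(n_dims)]
    distances = [
        math.dist(query, [rng.random() for _ in range(n_dims)])
        for _ in range(n_points)
    ]
    return (max(distances) - min(distances)) / min(distances)


# A value near zero means the nearest and farthest points are almost equally far away.
for n_dims in (2, 10, 100, 1000):
    print(n_dims, round(relative_contrast(500, n_dims), 3))
```

In low dimensions the farthest point is many times further away than the nearest one; in high dimensions the gap shrinks toward zero, so distance-based grouping has little to work with.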

How does the curse of dimensionality affect KNN?

KNN is very susceptible to overfitting due to the curse of dimensionality. The curse of dimensionality also describes the phenomenon where, for a fixed-size training dataset, the feature space becomes increasingly sparse as the number of dimensions grows.
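One way to observe this is to keep the training set fixed and add features that carry no information. The sketch below is an assumed toy setup, not a benchmark: two classes differ only in the first feature, every other feature is Gaussian noise, and a 1-nearest-neighbor classifier is written by hand. The names `make_data` and `one_nn_accuracy` are hypothetical.

```python
import math
import random


def make_data(n: int, n_noise_dims: int, rng: random.Random):
    """Two classes that differ only in the first feature; the rest is noise."""
    data = []
    for i in range(n):
        label = i % 2
        informative = rng.gauss(3.0 * label, 1.0)
        noise = [rng.gauss(0.0, 1.0) for _ in range(n_noise_dims)]
        data.append(([informative] + noise, label))
    return data


def one_nn_accuracy(n_noise_dims: int, n_train: int = 100,
                    n_test: int = 200, seed: int = 0) -> float:
    """Test accuracy of a 1-nearest-neighbor classifier on the toy data."""
    rng = random.Random(seed)
    train = make_data(n_train, n_noise_dims, rng)
    test = make_data(n_test, n_noise_dims, rng)
    correct = 0
    for x, y in test:
        # Predict the label of the closest training point.
        _, predicted = min(train, key=lambda item: math.dist(x, item[0]))
        correct += predicted == y
    return correct / n_test


for n_noise_dims in (0, 10, 100):
    print(n_noise_dims, one_nn_accuracy(n_noise_dims))
```

The training set size stays at 100 throughout; only the number of uninformative dimensions changes, and the nearest neighbor becomes a less reliable guide to the label as they pile up.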

Why is dimensionality reduction important in data mining?

For example, you may have a dataset with hundreds of features (columns in your database). Dimensionality reduction then means reducing those features or attributes by combining or merging them in such a way that the result does not lose many of the significant characteristics of the original dataset.

What happens when you use PCA for dimensionality reduction?

PCA helps us to identify patterns in data based on the correlation between features. In a nutshell, PCA aims to find the directions of maximum variance in high-dimensional data and projects it onto a new subspace with equal or fewer dimensions than the original one.
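As a sketch of that projection step, the code below computes the principal directions from the covariance matrix with NumPy and projects the data onto the top ones. The function name `pca_project` and the two-feature synthetic dataset are assumptions for illustration; this is not a particular library's implementation.

```python
import numpy as np


def pca_project(X: np.ndarray, n_components: int):
    """Project X onto its `n_components` directions of largest variance."""
    X_centered = X - X.mean(axis=0)
    covariance = np.cov(X_centered, rowvar=False)
    # eigh returns eigenvalues in ascending order, so reverse to get the largest first.
    eigenvalues, eigenvectors = np.linalg.eigh(covariance)
    order = np.argsort(eigenvalues)[::-1]
    components = eigenvectors[:, order[:n_components]]
    return X_centered @ components, components


# Synthetic data (assumed): two strongly correlated features.
rng = np.random.default_rng(0)
x = rng.normal(size=300)
X = np.column_stack([x, x + 0.1 * rng.normal(size=300)])

Z, components = pca_project(X, n_components=1)
print(Z.shape)             # one column instead of two
print(components.ravel())  # direction of maximum variance
```

The single projected column keeps at least as much variance as either original feature, because the first principal direction is chosen to maximize variance.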