What is a good Fleiss kappa value?

Interpreting the results from a Fleiss’ kappa analysis

| Value of κ | Strength of agreement |
|---|---|
| < 0.20 | Poor |
| 0.21-0.40 | Fair |
| 0.41-0.60 | Moderate |
| 0.61-0.80 | Good |
| 0.81-1.00 | Very good |

What does a kappa value mean?

Cohen suggested interpreting the kappa result as follows: values ≤ 0 indicate no agreement, 0.01–0.20 none to slight, 0.21–0.40 fair, 0.41–0.60 moderate, 0.61–0.80 substantial, and 0.81–1.00 almost perfect agreement.
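Applying this scale in an analysis script is a simple lookup. The sketch below maps a kappa value to the labels listed above. The function name `kappa_label` is hypothetical, and treating each band's upper bound as inclusive (so a value in a gap between printed ranges, such as 0.205, goes to the next band up) is an assumption, since the scale itself leaves those gaps unspecified.

```python
def kappa_label(kappa: float) -> str:
    """Map a kappa value to the verbal labels of the scale quoted above.

    Assumption: each band's upper bound is inclusive, so values that fall in
    the small gaps between the printed ranges go to the next band up.
    """
    if not kappa <= 1.0:  # also rejects NaN
        raise ValueError("kappa cannot exceed 1")
    if kappa <= 0.0:
        return "no agreement"
    if kappa <= 0.20:
        return "none to slight"
    if kappa <= 0.40:
        return "fair"
    if kappa <= 0.60:
        return "moderate"
    if kappa <= 0.80:
        return "substantial"
    return "almost perfect"


print(kappa_label(0.2099))  # prints fair
```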

Is Fleiss kappa weighted?

Fleiss’ kappa extends the kappa statistic from two raters to any fixed number of raters. As with Cohen’s kappa, no weighting is used, and the categories are treated as unordered.

How is Fleiss kappa calculated?

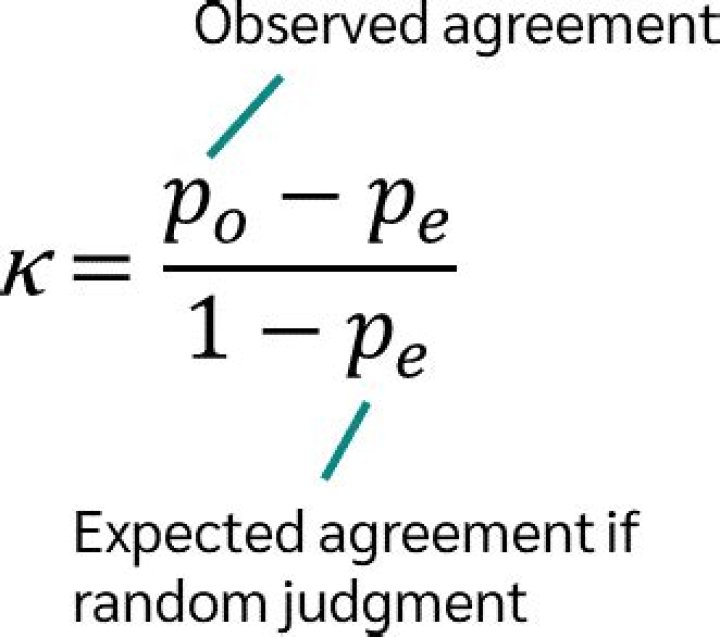

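In this example, Fleiss’ kappa is 0.2099. The general formula is κ = (P̄ − P̄e) / (1 − P̄e), where P̄ is the mean observed agreement across items and P̄e is the agreement expected by chance. Here P̄ = 0.37802 and P̄e = 0.2128, so the formula in cell C18 evaluates to κ = (0.37802 − 0.2128) / (1 − 0.2128) = 0.2099.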
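The same calculation can be sketched in plain Python, assuming the data are laid out as an items-by-categories table of counts (how many raters put each item in each category). The function names are hypothetical, and the ratings are hypothetical test data for 10 items, 14 raters and 5 categories, not necessarily the data in the figure; they were chosen because their intermediate values reproduce the P̄ and P̄e quoted above.

```python
from typing import Sequence, Tuple


def fleiss_components(counts: Sequence[Sequence[int]]) -> Tuple[float, float]:
    """Return (P_bar, P_e) for an items-by-categories table of rating counts.

    counts[i][j] is the number of raters who put item i in category j;
    every row must sum to the same number of raters n >= 2.
    """
    if not counts:
        raise ValueError("need at least one item")
    n, k = sum(counts[0]), len(counts[0])
    if n < 2:
        raise ValueError("need at least two raters per item")
    for row in counts:
        if len(row) != k or sum(row) != n:
            raise ValueError("every row needs the same categories and rater count")
    N = len(counts)
    # P_i: share of rater pairs that agree on item i
    p_i = [(sum(c * c for c in row) - n) / (n * (n - 1)) for row in counts]
    p_bar = sum(p_i) / N
    # p_j: share of all N * n assignments that went to category j
    p_j = [sum(row[j] for row in counts) / (N * n) for j in range(k)]
    p_e = sum(p * p for p in p_j)
    return p_bar, p_e


def fleiss_kappa(counts: Sequence[Sequence[int]]) -> float:
    p_bar, p_e = fleiss_components(counts)
    if p_e == 1.0:
        raise ValueError("kappa is undefined when every rating is the same category")
    return (p_bar - p_e) / (1 - p_e)


# Hypothetical ratings: 10 items (rows), 14 raters, 5 categories (columns).
ratings = [
    [0, 0, 0, 0, 14], [0, 2, 6, 4, 2], [0, 0, 3, 5, 6], [0, 3, 9, 2, 0],
    [2, 2, 8, 1, 1], [7, 7, 0, 0, 0], [3, 2, 6, 3, 0], [2, 5, 3, 2, 2],
    [6, 5, 2, 1, 0], [0, 2, 2, 3, 7],
]
p_bar, p_e = fleiss_components(ratings)
print(round(p_bar, 5), round(p_e, 4), round(fleiss_kappa(ratings), 4))
# prints 0.37802 0.2128 0.2099
```

Splitting out `fleiss_components` makes it easy to check P̄ and P̄e against a spreadsheet before looking at the final κ.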

What is kappa in machine learning?

In essence, the kappa statistic measures how closely the instances classified by a machine learning classifier match the data labeled as ground truth, correcting for the accuracy of a random classifier as measured by the expected accuracy.
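A minimal sketch of that idea, assuming the ground truth and the predictions are given as two equal-length label sequences (the function name `cohen_kappa` is hypothetical). Observed accuracy is the fraction of matching labels; expected accuracy is the agreement you would get by chance if predictions were independent of the truth, given each side's class frequencies.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohen_kappa(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    """Cohen's kappa between ground-truth labels and classifier predictions."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("need two non-empty label sequences of equal length")
    n = len(y_true)
    # Observed accuracy: fraction of instances where the prediction matches the truth.
    observed = sum(t == p for t, p in zip(y_true, y_pred)) / n
    # Expected accuracy: chance agreement implied by both sides' class frequencies.
    true_counts = Counter(y_true)
    pred_counts = Counter(y_pred)
    expected = sum(true_counts[c] * pred_counts[c] for c in true_counts) / (n * n)
    if expected == 1.0:
        raise ValueError("kappa is undefined when both sides use one identical class")
    return (observed - expected) / (1 - expected)


# A majority-class "classifier" on an imbalanced set: 90% accuracy, kappa 0.
y_true = ["neg"] * 9 + ["pos"]
y_pred = ["neg"] * 10
print(cohen_kappa(y_true, y_pred))  # prints 0.0
```

The usage line shows why kappa is preferred over raw accuracy on imbalanced data: always predicting the majority class scores 90% accuracy, but its observed accuracy equals the expected accuracy, so kappa is 0.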

What is kappa in research?

Cohen’s kappa coefficient (κ) is a statistic that is used to measure inter-rater reliability (and also intra-rater reliability) for qualitative (categorical) items. There is controversy surrounding Cohen’s kappa due to the difficulty in interpreting indices of agreement.

What does the p-value for kappa mean?

The p-value is the probability of observing agreement at least as strong as that in the sample if the null hypothesis were true; the smaller it is, the stronger the evidence against the null hypothesis. H₀ for Each Appraiser vs Standard: the agreement between the appraisers’ ratings and the standard is due to chance. H₀ for Between Appraisers: the agreement between appraisers is due to chance.
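Statistical software typically reports an asymptotic test for these hypotheses. The sketch below swaps in a simpler technique, a one-sided permutation test for two label sequences (two appraisers, or one appraiser vs the standard). Shuffling one sequence destroys any real pairing while keeping both sides' class counts fixed, so it simulates "agreement due to chance". Because those counts stay fixed, Cohen's kappa rises with the raw number of matches, so testing the match count is equivalent to testing kappa. The function name and its `n_perm` and `seed` parameters are hypothetical.

```python
import random
from typing import Hashable, Sequence


def agreement_p_value(
    rater_a: Sequence[Hashable],
    rater_b: Sequence[Hashable],
    n_perm: int = 10_000,
    seed: int = 0,
) -> float:
    """One-sided permutation p-value for H0: the agreement is due to chance."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("need two non-empty label sequences of equal length")

    def matches(labels: Sequence[Hashable]) -> int:
        return sum(a == b for a, b in zip(rater_a, labels))

    observed = matches(rater_b)
    rng = random.Random(seed)
    shuffled = list(rater_b)
    at_least_as_extreme = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # breaks the pairing, keeps rater_b's class counts
        if matches(shuffled) >= observed:
            at_least_as_extreme += 1
    # +1 in numerator and denominator counts the observed labelling itself
    return (at_least_as_extreme + 1) / (n_perm + 1)


appraiser = ["pass", "fail"] * 10
print(agreement_p_value(appraiser, appraiser))  # perfect agreement: a very small p-value
```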

What is Fleiss’ kappa?

Fleiss’ kappa (named after Joseph L. Fleiss) is a statistical measure for assessing the reliability of agreement between a fixed number of raters when they assign categorical ratings to, or classify, a number of items. It measures the degree of agreement in classification beyond what would be expected by chance.

What is Kappa performance?

It basically tells you how much better your classifier is performing than a classifier that simply guesses at random according to the frequency of each class. Cohen’s kappa is always less than or equal to 1. Values of 0 or less indicate that the classifier does no better than chance, i.e. it is useless.