How do you solve heteroscedasticity?

One approach for dealing with heteroscedasticity is to transform the dependent variable using a variance-stabilizing transformation. A logarithmic transformation can be applied to highly skewed variables, while count variables can be transformed using a square root transformation.
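The Python snippet below is a minimal sketch of the log transformation. It simulates hypothetical data with multiplicative errors, which is the case where the log works cleanly. It then compares the spread of y within each value of x before and after taking logs. The model coefficients and noise level are made up for illustration, and the square-root transformation for counts is not shown.

```python
import math
import random
import statistics

def spread_by_group(xs, ys):
    """Sample standard deviation of y within each distinct value of x."""
    groups = {}
    for x, y in zip(xs, ys):
        groups.setdefault(x, []).append(y)
    return {x: statistics.stdev(vals) for x, vals in sorted(groups.items())}

# Hypothetical data with a multiplicative error: y = exp(1 + 0.8*x + e), e ~ N(0, 0.3).
rng = random.Random(42)
xs, ys = [], []
for x in (1, 2, 3, 4):
    for _ in range(500):
        xs.append(x)
        ys.append(math.exp(1 + 0.8 * x + rng.gauss(0, 0.3)))

raw_spread = spread_by_group(xs, ys)                         # spread of y itself
log_spread = spread_by_group(xs, [math.log(y) for y in ys])  # spread after the log transform

for x in raw_spread:
    print(f"x={x}: sd(y)={raw_spread[x]:8.2f}   sd(log y)={log_spread[x]:.3f}")
```

On the raw scale, the standard deviation grows by roughly an order of magnitude between x = 1 and x = 4. On the log scale it stays close to the simulated 0.3.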

How do you fix heteroscedasticity in regression?

There are three common ways to fix heteroscedasticity:

- Transform the dependent variable, for example by taking its log.

- Redefine the dependent variable, for example by modeling a rate (such as a per-capita value) instead of a raw total.

- Use weighted regression, which gives less weight to observations whose errors have higher variance.
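The list does not say how the dependent variable should be redefined. One common version, assumed here, is to model a per-capita rate instead of a raw total. The sketch below uses hypothetical town populations and totals. It fits the raw total against population (least squares through the origin) and compares the residual spread in smaller and larger towns, then repeats that comparison for the rate.

```python
import random
import statistics

def resid_sd_by_size(sizes, resid):
    """Residual standard deviation for the smaller half and the larger half of the units."""
    order = sorted(range(len(sizes)), key=lambda i: sizes[i])
    half = len(order) // 2
    small = [resid[i] for i in order[:half]]
    large = [resid[i] for i in order[half:]]
    return statistics.stdev(small), statistics.stdev(large)

rng = random.Random(7)
# Hypothetical towns: population between 1,000 and 100,000, and a per-person
# rate (e.g. shops per resident) around 0.002 with a constant spread.
pop = [rng.uniform(1_000, 100_000) for _ in range(400)]
total = [p * rng.gauss(0.002, 0.0004) for p in pop]

# Original dependent variable: the raw total, regressed on population
# (least squares through the origin).
slope = sum(t * p for t, p in zip(total, pop)) / sum(p * p for p in pop)
resid_total = [t - slope * p for t, p in zip(total, pop)]

# Redefined dependent variable: the per-capita rate, modeled by its mean.
rate = [t / p for t, p in zip(total, pop)]
mean_rate = statistics.fmean(rate)
resid_rate = [r - mean_rate for r in rate]

total_small, total_large = resid_sd_by_size(pop, resid_total)
rate_small, rate_large = resid_sd_by_size(pop, resid_rate)
print(f"raw total: residual sd {total_small:.2f} (smaller towns), {total_large:.2f} (larger towns)")
print(f"rate:      residual sd {rate_small:.6f} (smaller towns), {rate_large:.6f} (larger towns)")
```

The raw-total residuals are clearly more spread out for the larger towns. The rate residuals have about the same spread in both groups.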

How can homoscedasticity be violated?

Violation of the homoscedasticity assumption results in heteroscedasticity, where the spread (variance) of the dependent variable increases or decreases as a function of the independent variables. Typically, homoscedasticity violations occur when one or more of the variables under investigation are not normally distributed.

How do you fix heteroscedasticity?

One way to correct for heteroscedasticity is to compute the weighted least squares (WLS) estimator using a hypothesized specification for the variance. Often this specification assumes that the error variance is proportional to one of the regressors or to its square.
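Below is a minimal sketch of WLS for a single regressor. As the hypothesized specification, it assumes that the error variance is proportional to the square of the regressor, so each observation is weighted by 1/x². The data-generating model is hypothetical. Repeating the fit on many simulated samples shows that both estimators are centered on the true slope, but the WLS slope varies less from sample to sample.

```python
import random
import statistics

def weighted_fit(xs, ys, ws):
    """Weighted least-squares intercept and slope for y = a + b*x.

    With all weights equal to 1 this reduces to ordinary least squares.
    """
    sw = sum(ws)
    xbar = sum(w * x for w, x in zip(ws, xs)) / sw
    ybar = sum(w * y for w, y in zip(ws, ys)) / sw
    sxy = sum(w * (x - xbar) * (y - ybar) for w, x, y in zip(ws, xs, ys))
    sxx = sum(w * (x - xbar) ** 2 for w, x in zip(ws, xs))
    slope = sxy / sxx
    return ybar - slope * xbar, slope

rng = random.Random(1)
xs = [rng.uniform(1, 10) for _ in range(100)]  # the same x values in every replication
ones = [1.0] * len(xs)                          # OLS: equal weights
# Hypothesized variance specification: Var(e_i) proportional to x_i**2,
# so each observation gets weight 1 / x_i**2.
inv_var = [1 / x**2 for x in xs]

ols_slopes, wls_slopes = [], []
for _ in range(1000):
    # Hypothetical true model: y = 2 + 3x + e, with sd(e) = 0.5 * x.
    ys = [2 + 3 * x + rng.gauss(0, 0.5 * x) for x in xs]
    ols_slopes.append(weighted_fit(xs, ys, ones)[1])
    wls_slopes.append(weighted_fit(xs, ys, inv_var)[1])

print("mean slope   OLS:", round(statistics.fmean(ols_slopes), 3),
      " WLS:", round(statistics.fmean(wls_slopes), 3))
print("sd of slope  OLS:", round(statistics.stdev(ols_slopes), 4),
      " WLS:", round(statistics.stdev(wls_slopes), 4))
```

With these settings, both averages should sit close to the true slope of 3, and the WLS standard deviation should be noticeably smaller. If the hypothesized variance specification is wrong, WLS can lose some of this advantage.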

How do you test for homoscedasticity?

Residuals can be tested for homoscedasticity using the Breusch–Pagan test, which performs an auxiliary regression of the squared residuals on the independent variables.
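To make the auxiliary regression concrete, here is a minimal pure-Python sketch for a model with one regressor. It uses Koenker's studentized form of the statistic, LM = n·R² from regressing the squared residuals on x. When the errors are homoscedastic, this statistic is approximately chi-squared with 1 degree of freedom. The simulated data are hypothetical. In practice you would normally use a library routine, such as statsmodels' `het_breuschpagan`.

```python
import math
import random

def ols_fit(xs, ys):
    """Intercept and slope of the least-squares line y = a + b*x."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    sxx = sum((x - xbar) ** 2 for x in xs)
    sxy = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
    b = sxy / sxx
    return ybar - b * xbar, b

def r_squared(xs, ys):
    """R^2 of the simple regression of ys on xs."""
    a, b = ols_fit(xs, ys)
    ybar = sum(ys) / len(ys)
    ss_res = sum((y - a - b * x) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - ybar) ** 2 for y in ys)
    return 1 - ss_res / ss_tot

def breusch_pagan(xs, ys):
    """Breusch-Pagan LM test (Koenker's n*R^2 form) for one regressor.

    Returns (LM statistic, p-value); under homoscedasticity LM ~ chi-squared(1).
    """
    a, b = ols_fit(xs, ys)
    sq_resid = [(y - a - b * x) ** 2 for x, y in zip(xs, ys)]
    lm = len(xs) * r_squared(xs, sq_resid)   # auxiliary regression of e^2 on x
    p_value = math.erfc(math.sqrt(lm / 2))   # upper tail of chi-squared with 1 df
    return lm, p_value

rng = random.Random(3)
xs = [rng.uniform(0, 10) for _ in range(200)]
y_const = [1 + 2 * x + rng.gauss(0, 1) for x in xs]            # constant spread
y_fan = [1 + 2 * x + rng.gauss(0, 0.3 + 0.5 * x) for x in xs]  # spread grows with x

print("constant spread:", breusch_pagan(xs, y_const))
print("fanning spread: ", breusch_pagan(xs, y_fan))
```

A small p-value, as expected for the fanning data, is evidence against homoscedasticity.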

Is homoscedasticity good or bad?

Homoscedasticity does provide a solid, explainable place to start an analysis or a forecast, but sometimes you want your data to be messy, if for no other reason than to say “this is not the place we should be looking.”

How do you check homoscedasticity assumptions?

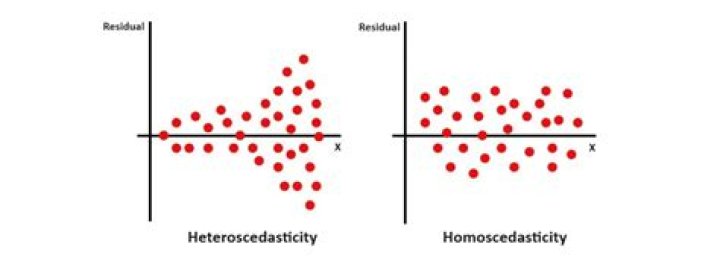

The last assumption of multiple linear regression is homoscedasticity. A scatterplot of residuals versus predicted values is a good way to check for homoscedasticity. There should be no clear pattern in the distribution; if there is a cone-shaped pattern (as shown in the image), the data is heteroscedastic.

What happens if OLS assumptions are violated?

The Assumption of Homoscedasticity (OLS Assumption 5): if the errors are heteroscedastic (i.e., this OLS assumption is violated), it will be difficult to trust the standard errors of the OLS estimates. Hence, the confidence intervals will be either too narrow or too wide.
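The claim about untrustworthy standard errors can be checked with a small simulation. The sketch below uses a hypothetical model whose error standard deviation is proportional to |x|. It refits a simple regression 2,000 times and compares the actual spread of the slope estimates with the average standard error that the usual OLS formula reports. In this particular setup the reported standard errors are too small, so nominal 95% intervals cover the true slope less than 95% of the time. Other variance patterns can push the error in the other direction.

```python
import math
import random
import statistics

def slope_and_classical_se(xs, ys):
    """OLS slope plus the conventional standard error, which assumes constant error variance."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    sxx = sum((x - xbar) ** 2 for x in xs)
    b = sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys)) / sxx
    a = ybar - b * xbar
    s2 = sum((y - a - b * x) ** 2 for x, y in zip(xs, ys)) / (n - 2)  # one pooled error variance
    return b, math.sqrt(s2 / sxx)

TRUE_SLOPE = 0.5
rng = random.Random(5)
xs = [rng.uniform(-1, 1) for _ in range(100)]  # the same x values in every replication

slopes, reported_se = [], []
for _ in range(2000):
    # Hypothetical model: y = 1 + 0.5x + e, where sd(e_i) = 2|x_i| (heteroscedastic).
    ys = [1 + TRUE_SLOPE * x + rng.gauss(0, 2 * abs(x)) for x in xs]
    b, se = slope_and_classical_se(xs, ys)
    slopes.append(b)
    reported_se.append(se)

actual_sd = statistics.stdev(slopes)        # how much the slope really varies
typical_se = statistics.fmean(reported_se)  # what the OLS formula claims
covered = sum(abs(b - TRUE_SLOPE) <= 1.96 * se for b, se in zip(slopes, reported_se))
coverage = covered / len(slopes)            # share of nominal 95% intervals containing 0.5

print(f"actual sd of slope:    {actual_sd:.4f}")
print(f"average reported SE:   {typical_se:.4f}")
print(f"95% interval coverage: {coverage:.3f}")
```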

What happens if regression assumptions are violated?

Violating the no-multicollinearity assumption does not affect prediction, but it can affect inference. For example, p-values typically become larger for highly correlated covariates, which can make variables that truly matter appear statistically insignificant. Violating linearity can affect both prediction and inference.
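The effect of correlated covariates on p-values follows from the standard formula for the sampling variance of an OLS coefficient. Here it is stated under the usual homoscedastic assumptions, where $R_j^2$ is the $R^2$ from regressing predictor $x_j$ on the other predictors:

$$
\operatorname{Var}(\hat\beta_j) = \frac{\sigma^2}{(1 - R_j^2)\,\sum_{i=1}^{n} (x_{ij} - \bar{x}_j)^2},
\qquad
\mathrm{VIF}_j = \frac{1}{1 - R_j^2}
$$

For example, if $R_j^2 = 0.9$ the variance inflation factor is 10. The standard error of $\hat\beta_j$ is then about $\sqrt{10} \approx 3.2$ times larger than it would be with uncorrelated predictors, and its t-statistic shrinks by the same factor.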

What causes heteroskedasticity?

Heteroscedasticity is often due to the presence of outliers in the data, that is, observations that are much smaller or much larger than the other observations in the sample. Heteroscedasticity can also be caused by omitting variables from the model.

Is heteroscedasticity good or bad?

Heteroskedasticity has serious consequences for the OLS estimator. Although the OLS estimator remains unbiased, its estimated standard errors are wrong. Because of this, confidence intervals and hypothesis tests cannot be relied on. Heteroskedasticity can best be understood visually.

Why do we check for homoscedasticity?

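There are two big reasons why you want homoscedasticity. First, although heteroscedasticity does not cause bias in the coefficient estimates, it does make them less precise, and lower precision increases the likelihood that the estimates are further from the correct population values. Second, heteroscedasticity makes the estimated standard errors wrong, so the resulting p-values and confidence intervals cannot be trusted.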

Which is the best way to fix heteroscedasticity?

One way to fix heteroscedasticity is to transform the dependent variable in some way. One common transformation is to simply take the log of the dependent variable.

How can I remove heteroskedasticity from my data?

I have used the Breusch–Pagan–Godfrey and White tests to detect heteroskedasticity. One way to remove it is to transform the data into logs, which reduces the effect of large errors relative to small ones and pulls in extreme values.

When do we violate the assumption of homoscedasticity?

Heteroscedasticity (the violation of homoscedasticity) is present when the size of the error term differs across values of an independent variable. The impact of violating the assumption of homoscedasticity is a matter of degree, increasing as heteroscedasticity increases.

Which is the best way to test for heteroscedasticity?

Once the model is fitted, there are two ways to test for heteroscedasticity: graphically, or with a statistical test. For the graphical check, the diagnostic plots of interest are the top-left and bottom-left ones. The top-left is the chart of residuals vs fitted values, while the bottom-left one has standardised residuals on the Y axis.

How do you know if a set of data has homoscedasticity?

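When viewing a graph, the easiest way to judge homoscedasticity is to look at the distances from the points to the regression line: if they are roughly the same size across the range of the data, the data shows homoscedasticity. Technically, it's the variance of those distances (the residuals) that counts, and that's what you'd use in calculations.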
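The sketch below puts a number on "it's the variance that counts". It fits a straight line, sorts the observations by x, and computes the residual variance in four equal-sized groups. The two datasets are hypothetical, and the four-group binning is an illustrative choice rather than a formal test. The Breusch–Pagan test is the formal counterpart.

```python
import random
import statistics

def residual_variance_by_bin(xs, ys, n_bins=4):
    """Fit y = a + b*x by least squares, then return the residual variance
    within equal-count bins of x (lowest x first)."""
    n = len(xs)
    xbar, ybar = sum(xs) / n, sum(ys) / n
    b = (sum((x - xbar) * (y - ybar) for x, y in zip(xs, ys))
         / sum((x - xbar) ** 2 for x in xs))
    a = ybar - b * xbar
    pairs = sorted(zip(xs, ys))  # order observations by x
    size = n // n_bins
    out = []
    for k in range(n_bins):
        # The last bin absorbs any leftover observations.
        chunk = pairs[k * size:(k + 1) * size] if k < n_bins - 1 else pairs[k * size:]
        out.append(statistics.variance([y - a - b * x for x, y in chunk]))
    return out

rng = random.Random(11)
xs = [rng.uniform(0, 10) for _ in range(400)]
even = [3 + x + rng.gauss(0, 1.0) for x in xs]      # constant error spread
cone = [3 + x + rng.gauss(0, 0.2 * x) for x in xs]  # spread grows with x (cone shape)

for name, ys in (("even spread", even), ("cone-shaped", cone)):
    variances = residual_variance_by_bin(xs, ys)
    print(name, [round(v, 2) for v in variances],
          "max/min ratio:", round(max(variances) / min(variances), 1))
```

Roughly equal variances across the groups suggest homoscedasticity. A steady increase, as in the cone-shaped data, corresponds to the cone pattern in a residual plot.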