How do you control heteroscedasticity?

The idea is to give smaller weights to observations with higher error variance, which shrinks their contribution to the sum of squared residuals. Weighted regression (weighted least squares) minimizes the sum of the weighted squared residuals. With the correct weights, which are inversely proportional to each observation's error variance, the weighted errors are homoscedastic.
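As a minimal sketch of the weighting idea, the snippet below fits a straight line by weighted least squares using only the standard library. The function name `weighted_least_squares`, the simulated data, and the assumption that the error standard deviation is proportional to x (so the weights are 1/x²) are all illustrative choices, not part of the original answer.

```python
import random

def weighted_least_squares(x, y, w):
    """Fit y = a + b*x by minimizing sum(w_i * (y_i - a - b*x_i)**2)."""
    sw = sum(w)
    # Weighted means of x and y
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    # Weighted cross-product and weighted sum of squares
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope

# Simulated data (assumption): noise standard deviation grows with x
random.seed(1)
x = [float(i) for i in range(1, 51)]
y = [1.0 + 2.0 * xi + random.gauss(0.0, 0.5 * xi) for xi in x]

# Weights inversely proportional to the assumed error variance (0.5*x)^2
w = [1.0 / xi ** 2 for xi in x]

intercept, slope = weighted_least_squares(x, y, w)
print(f"WLS intercept = {intercept:.3f}, slope = {slope:.3f}")
```

In practice the true variances are rarely known, so the weights usually come from a model of the variance or from an initial OLS fit.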

What are the causes of heteroscedasticity?

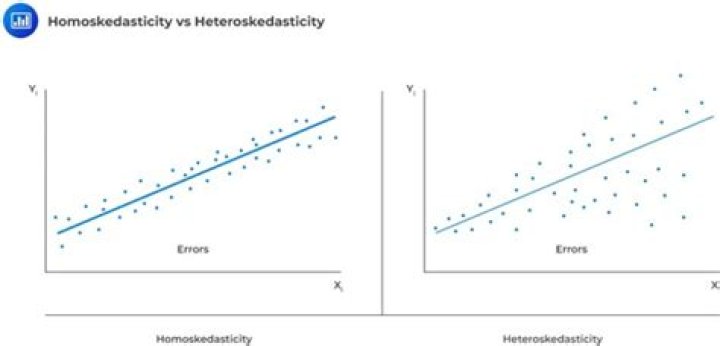

Heteroscedasticity is commonly caused by outliers in the data, that is, observations that are very small or very large relative to the other observations in the sample. It can also be caused by omitting relevant variables from the model.

How do you treat heteroscedasticity in regression?

One very popular way to deal with heteroscedasticity is to transform the dependent variable [2]. We can apply a log transformation to the variable and then run White’s test again to check whether heteroscedasticity remains. For demonstration, we removed some of the low values on the y-axis.
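The effect of a log transformation can be sketched on simulated data. The example below is an assumption-laden illustration: the data are generated with multiplicative noise, and instead of White’s test it uses a crude split-sample variance ratio (residual variance in the upper half of x divided by that in the lower half) as a stand-in diagnostic. The helper names `ols` and `variance_ratio` are hypothetical.

```python
import math
import random

def ols(x, y):
    """Ordinary least squares fit of y = a + b*x; returns (a, b)."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

def variance_ratio(x, y):
    """Mean squared residual for the upper half of x over the lower half.

    Values far above 1 suggest the residual spread grows with x.
    This is a rough check, not White's test.
    """
    a, b = ols(x, y)
    pairs = sorted(zip(x, y))
    resid_sq = [(yi - a - b * xi) ** 2 for xi, yi in pairs]
    half = len(resid_sq) // 2
    low = sum(resid_sq[:half]) / half
    high = sum(resid_sq[half:]) / (len(resid_sq) - half)
    return high / low

# Simulated data (assumption): multiplicative noise, so spread grows with y
random.seed(0)
x = [5 * i / 199 for i in range(200)]
y = [math.exp(1 + 0.5 * xi + random.gauss(0, 0.5)) for xi in x]

ratio_raw = variance_ratio(x, y)
ratio_log = variance_ratio(x, [math.log(yi) for yi in y])
print(f"variance ratio, raw y: {ratio_raw:.2f}")
print(f"variance ratio, log y: {ratio_log:.2f}")
```

A ratio much closer to 1 after the transformation indicates that the residual spread has become more even. A log transformation requires strictly positive values of the dependent variable.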

What are the consequences of autocorrelation?

The OLS estimators will be inefficient and therefore no longer BLUE. The estimated variances of the regression coefficients will be biased and inconsistent, so hypothesis tests are no longer valid. In most cases, R² will be overestimated and the t-statistics will tend to be inflated.

How are you going to detect autocorrelation?

Autocorrelation is diagnosed using a correlogram (ACF plot) and can be tested using the Durbin-Watson test. The "auto" in autocorrelation comes from the Greek word for self: autocorrelation means data that is correlated with itself, as opposed to being correlated with some other data.
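Both diagnostics are easy to compute by hand. The sketch below gives the Durbin-Watson statistic and the sample autocorrelation at a given lag (one point of the correlogram) in plain Python. The function names and the example residuals are made up for illustration.

```python
def durbin_watson(residuals):
    """Durbin-Watson statistic: sum of squared successive differences
    divided by the sum of squared residuals.

    Values near 2 suggest no first-order autocorrelation, values toward 0
    suggest positive autocorrelation, values toward 4 suggest negative.
    """
    num = sum(
        (residuals[t] - residuals[t - 1]) ** 2
        for t in range(1, len(residuals))
    )
    den = sum(e ** 2 for e in residuals)
    return num / den

def autocorrelation(series, lag):
    """Sample autocorrelation of a series at the given positive lag."""
    n = len(series)
    mean = sum(series) / n
    den = sum((v - mean) ** 2 for v in series)
    num = sum(
        (series[t] - mean) * (series[t - lag] - mean)
        for t in range(lag, n)
    )
    return num / den

# Hypothetical regression residuals that drift slowly (positive autocorrelation)
residuals = [0.5, 0.6, 0.4, 0.3, -0.2, -0.4, -0.5, -0.3, 0.1, 0.4]

print("Durbin-Watson:", round(durbin_watson(residuals), 3))
for k in (1, 2, 3):
    print(f"ACF at lag {k}:", round(autocorrelation(residuals, k), 3))
```

Plotting `autocorrelation(residuals, k)` against `k` gives the correlogram. The formal Durbin-Watson test compares the statistic with tabulated lower and upper critical bounds.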

What causes impure heteroskedasticity?

Impure heteroskedasticity is caused by a specification error, such as an omitted variable.

Does correlation cause heteroskedasticity?

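If you model serial correlation, you are usually assuming weak stationarity. Weak stationarity requires a constant unconditional variance, so unconditional heteroskedasticity is ruled out by assumption. Conditional heteroskedasticity, as in ARCH-type models, is still possible.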
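For reference, the weak stationarity conditions mentioned above can be written out as follows. The symbols $x_t$, $\mu$, $\sigma^2$, and $\gamma_k$ are notation assumed here, not taken from the original answer.

```latex
\begin{aligned}
\mathbb{E}[x_t] &= \mu, \\
\operatorname{Var}(x_t) &= \sigma^2 < \infty, \\
\operatorname{Cov}(x_t, x_{t-k}) &= \gamma_k \quad \text{for all } t \text{ and each lag } k.
\end{aligned}
```

The second condition is the one that matters for this question: the variance does not depend on $t$.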